
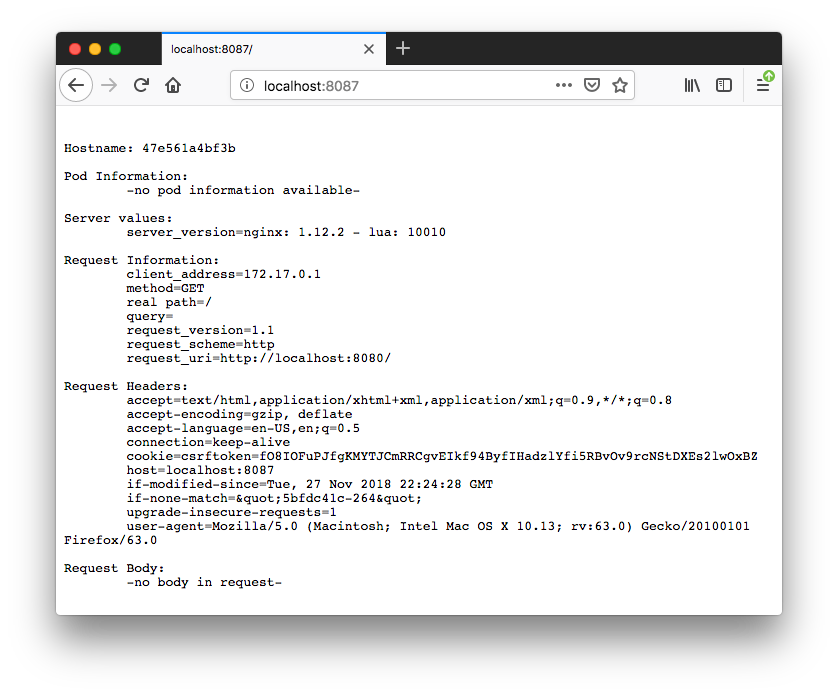

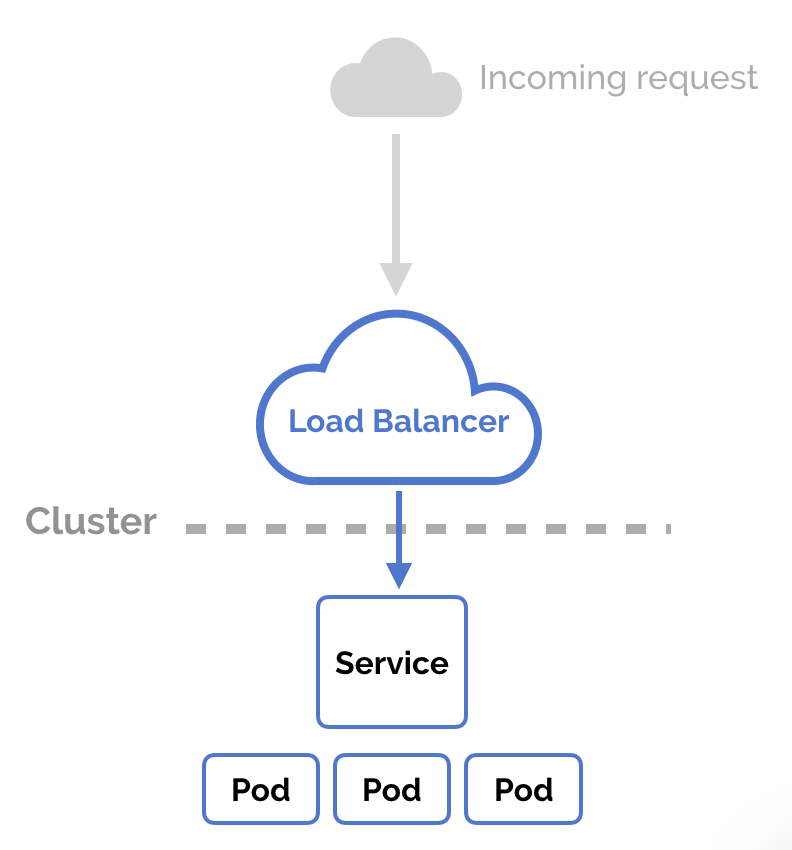

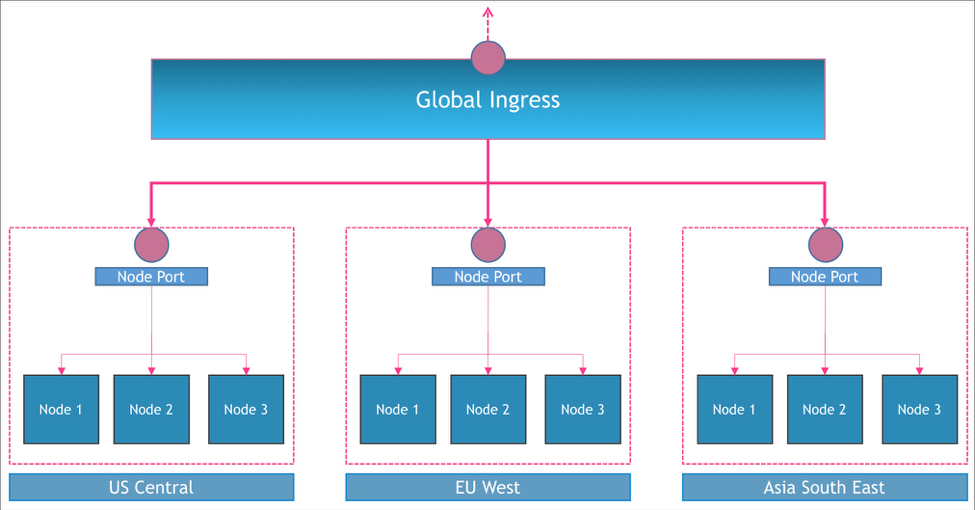

Copy/paste the temporary domain, e.g. a1b2c3d4e5f6.ngrok.io. Don't close this terminal: once you quit ngrok, the temporary domain is purged.

Features: Skaffold, Docker for Mac / Windows (version: 0.0.4), K8s Nginx ingress for Docker for Mac / Windows, Nginx ingress default backend, Nginx ingress rewrite-target, K8s Traefik ingress for k3d / k3s, Traefik ingress default backend, Traefik ingress PathPrefixStrip.

This blog post is the 3rd in a 4 part series with the goal of thoroughly explaining how Kubernetes Ingress works:

- Ingress 101: What is Kubernetes Ingress? Why does it exist?
- Ingress 102: Kubernetes Ingress Implementation Options (this article)
- Ingress 103: Productionalizing Kubernetes Ingress (delayed, ETA mid-2020)

In the previous posts, I purposefully left out some advanced implementation options, as I wanted to keep a nice, easy to follow flow of related topics that could help develop a strong foundational mental schema. In this post, I'll build on that foundation with the purpose of opening up your mind to the range of implementation options.

Installation Guide

Assuming you have Kubernetes and Minikube (or Docker for Mac) installed, follow these steps to set up the Nginx Ingress Controller on your local Minikube cluster. Start by creating the mandatory resources for Nginx Ingress in your cluster.

If you don't specify anything, externalTrafficPolicy defaults to "Cluster" (it can also be written as externalTrafficPolicy: ""). Setting it to "Cluster" load balances incoming traffic equally across all nodes, and "Cluster" has greater compatibility with different Kubernetes implementations than "Local". It has two drawbacks. Because traffic is spread across every node, it can create a lack of consistent network stability in environments with lots of node reboots (spot instances, or constant scaling up and down of the total number of nodes). Pods also get an incorrect source IP due to SNAT: they see the Kubernetes node IP as the source IP.
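As a rough illustration of where this setting lives, here is a minimal sketch of a Service in front of an Ingress Controller. The Service name, namespace, and selector label are hypothetical placeholders, not values taken from this post; adjust them to match whatever your controller's manifests actually use.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: ingress-controller        # hypothetical name
  namespace: ingress              # hypothetical namespace
spec:
  type: LoadBalancer              # externalTrafficPolicy applies to NodePort and LoadBalancer Services
  # "Cluster" is the default when the field is omitted.
  # Switch to "Local" to send traffic only to nodes running a controller pod.
  externalTrafficPolicy: Cluster
  selector:
    app: ingress-controller       # assumed label on the controller pods
  ports:
    - name: http
      port: 80
      targetPort: 80
    - name: https
      port: 443
      targetPort: 443
```

Changing the single externalTrafficPolicy line is enough to compare the two behaviors on a test cluster, assuming your Kubernetes implementation supports "Local".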

externalTrafficPolicy: "Local" is supported by fewer Kubernetes implementations than "Cluster". "Local" refers to the fact that a node will only forward incoming NodePort traffic to a pod that exists locally on that host. Setting it to "Local" load balances traffic to the subset of nodes that have Ingress Controller pods running on them. (This is accomplished by creating a /healthz endpoint on a new NodePort for nodes that are running an Ingress Controller. The external LB then targets these nodes, and if traffic goes to a node that's not running an Ingress Controller, that node drops the traffic.)

This can increase networking stability in environments with lots of node reboots, which could be caused by using spot instances or cluster autoscaler with several node tiers. (You would increase stability by only deploying Ingress Controller pods on nodes that are extra stable. Sending pods to extra stable nodes can be accomplished using node affinity statements or a DaemonSet with node label selectors.)

Pods also have a better chance of getting the correct source IP. (It could be the case that instead of incorrectly seeing the Kubernetes node IP as the source, a pod would incorrectly see the external LB's IP as the source; further configuration can usually fix this.)

Option 3: Pod directly exposed on the LAN using hostNetwork: true

hostNetwork: true gives a pod the same IP address as the host node and directly exposes the pod on the LAN. If the pod is listening for traffic on port 443, the host node will be listening for traffic on port 443, and traffic that comes in on port 443 of the host node will be mapped to the pod. This bypasses Kubernetes Services and Container Network Interfaces (CNI) altogether, so a pod that specifies this will work even if CNI isn't working. (Control plane components running as pods use this setting for that reason.)

Note: If you enable this setting, you'll also need to set the Ingress Controller pod's dnsPolicy spec field to ClusterFirstWithHostNet, so that it can resolve inner cluster DNS names.

You can have an Ingress Controller listen directly on port 443/80 of the host node, which is very useful for Minikube and Raspberry Pi setups that might not have access to a LoadBalancer. I've seen Kubernetes patch updates cause regressions that break this, so it could create more stability when you're on a working version, but then an update could lower stability if it introduces a regression.
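To make the hostNetwork setup more concrete, here is a minimal sketch of a DaemonSet whose pods share the node's network namespace. Everything named here (the DaemonSet name, labels, the `ingress-ready` node label, and the container image) is a hypothetical placeholder rather than something from this post. Note that in a manifest the field takes a boolean, so it is written `hostNetwork: true` without quotes.

```yaml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: ingress-controller            # hypothetical name
spec:
  selector:
    matchLabels:
      app: ingress-controller
  template:
    metadata:
      labels:
        app: ingress-controller
    spec:
      hostNetwork: true                       # pod uses the node's IP and ports directly
      dnsPolicy: ClusterFirstWithHostNet      # needed so the pod can still resolve cluster DNS names
      nodeSelector:
        ingress-ready: "true"                 # hypothetical label; only run on nodes you have labeled
      containers:
        - name: controller
          image: example.com/ingress-controller:placeholder   # placeholder image, replace with your controller
          ports:
            - containerPort: 80               # becomes port 80 on the node itself
            - containerPort: 443              # becomes port 443 on the node itself
```

The nodeSelector is optional. It is included only to show one way of limiting the controller to specific nodes, since only one process per node can bind ports 80 and 443.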

Bypassing Kubernetes Services and CNI means these pods also bypass Kubernetes Network Policies.

Option 4: Use an Inlets Server to expose a Cluster IP Ingress Controller Service on the Internet or a LAN

Note: Inlets, by CNCF Ambassador Alex Ellis, is not a Kubernetes native solution; however, it happens to work great with Kubernetes.

Inlets is a universal solution to ingress that allows you to forward traffic coming to an Inlets Server (hosted on the public Internet or a LAN) to a service hosted on a normally unreachable private inner network. The private inner network could exist on the public cloud, on premises, behind multiple layers of Carrier Grade NAT (no chance at having a DHCP public IP with dynamic DNS and port forwarding configured), on a Kubernetes inner cluster network, behind a Minikube Linux VM, or behind a VirtualBox virtual network NAT hosted on a laptop that's connected to the Internet via an LTE uplink supplied by a cell phone's mobile wifi hotspot. The server doesn't need a route to the private inner network that it will forward traffic to, but the private inner network will need a route to the server.

Inlets is the only universal solution for publicly exposing an Ingress Controller: it'll work on a cluster hosted at home, on a Raspberry Pi, on-prem, or in the cloud.


Author: Preston
